Since the 1950s, computer scientists have studied artificial intelligence (AI) and how systems can replicate human intelligence and problem-solving abilities. As technology continues to expand, people have built parasocial relationships with AI programs such as ChatGPT and Grammarly, and they ask voice assistants such as Alexa and Siri various questions every day. In a time when technological possibilities seem endless, AI image generators have showcased impressive feats in recent years. With programs like DALL·E 3 and Midjourney, people can now type prompts and watch their words transform into a series of images in a matter of seconds. While it remains fascinating that technology can create art, AI’s increasing accessibility can result in disturbing images that harm various communities.

When OpenAI introduced programs such as DALL·E 2 to the public, people could not stop typing prompts and watching as their ideas appeared on a digital screen. AI art remains fascinating and useful as people use these programs to enhance artistic journeys and visual explorations. Even on The Chant, one published article used a cover image generated by an AI program. When people use AI art in a way that does not harm or misinform others, they should feel free to type their prompts. However, because the technology is so accessible, people can also use these programs in derogatory and perverted ways.

As new image generators turn prompts into pictures, AI companies allegedly use existing art to train their programs. As people became curious about the art styles they could apply to their bizarre ideas, users distributed AI-generated posters in the style of Disney Pixar films on social media. The trend started with innocent pictures of pets, but it quickly devolved into users creating posters of the Holocaust and various other atrocities. Microsoft took swift action to restrict users from typing “Disney” into its AI search engine after Disney raised copyright concerns.

However, the creation of explicit content through AI-generated art drastically increased after the Pixar trend. People started typing prompts for depictions of child abuse and sexual objectification. Xochitl Gomez, a 17-year-old actress, spoke out about finding nonconsensual sexually explicit deepfakes with her face on social media. On a podcast, Gomez discussed her discomfort with the deepfakes and felt that they invaded her privacy. Zubear Abdi, known for his notorious X account ZvBear, faced backlash on the website for sharing explicit deepfake images of billionaire Taylor Swift. The distribution of these images backfired on Abdi: he faced so much online scrutiny that internet users notified the White House of the images. The controversial X user made his account private moments after.

“The extremely dark side of AI has been shown after what happened with Taylor Swift. Deepfakes have been used for fake political information, scams, and in the case of Taylor Swift, sexual content. As the capabilities of AI grow and as more unethical deepfake services are built, creating sexual content against anyone will become easier for malicious actors. Sadly, countless other people have been affected by deepfakes, including high schoolers and younger people. Hosting deepfake services and sharing content produced by them needs to be seen as a form of sexual harassment and criminal activity by our government. The UK has already criminalized sharing deepfake sexual content in 2023, and hopefully, the US follows with legislation too,” magnet senior Bradley Crasto said.

Amid a Palestinian genocide in the Gaza Strip, the state of Israel recently showcased a propaganda advertisement on the streaming platform Hulu. In the advertisement, Israel reportedly broadcast several AI depictions of Gazans and dramatically claimed that Hamas caused over 30,000 Palestinians to die. As Palestinians worry about displacement and their ability to live another day, the state of Israel created a controversial commercial with the intent to misinform the public.

In regard to misinformation, the popular newspaper “New York Post” published an absurd article regarding Jack the Ripper. Instead of practicing proper journalism, the publication falsely claimed that AI programs finally revealed the notorious killer’s identity. People commonly use AI art to create quick images for entertainment, but AI images do not count as reliable sources of information.

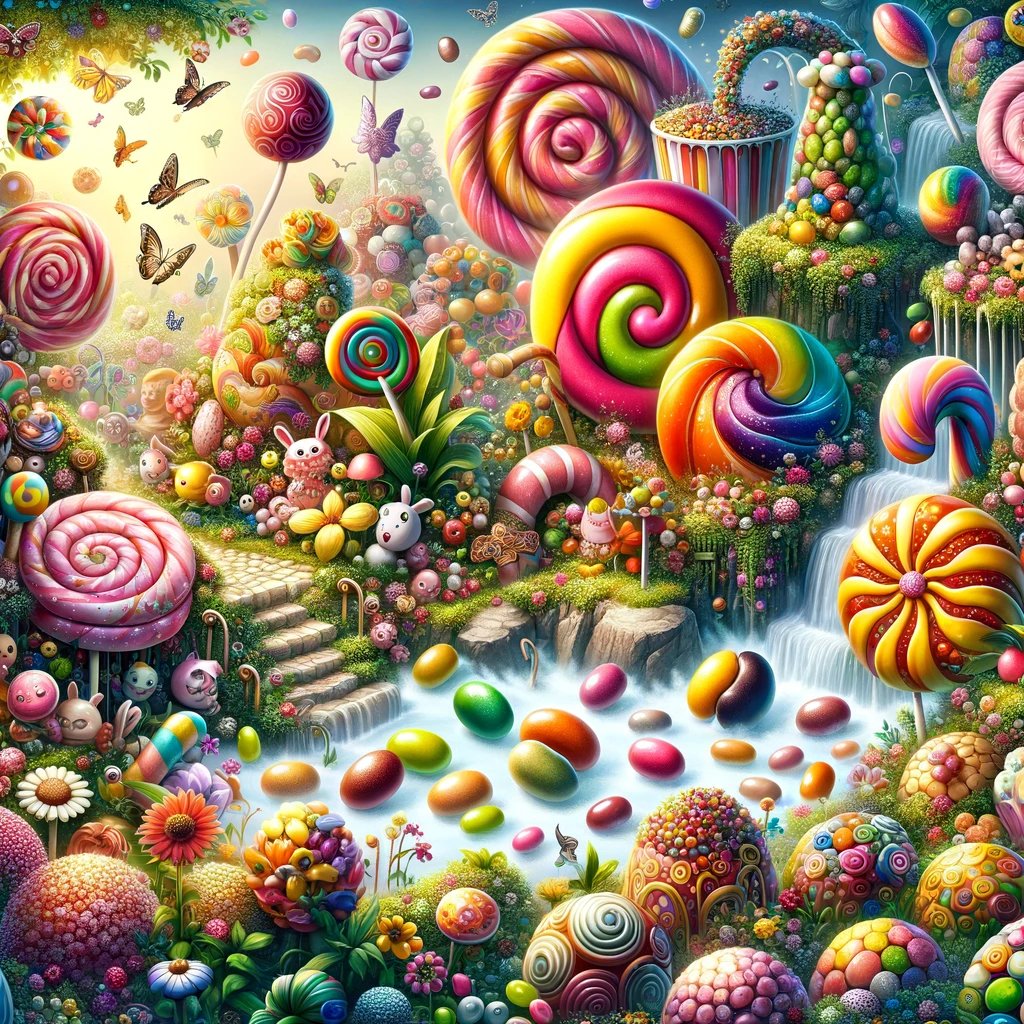

Parents paid over $40 with the expectation that their families would experience the tale of Willy Wonka like never before at an event held in a warehouse in Glasgow, Scotland. Willy’s Chocolate Experience promised to provide sweet treats and magical surprises to its guests, and parents took the advertisement at face value. However, parents overlooked a crucial detail about the event’s advertisements: every image on its website was AI-generated. When families walked into the warehouse in late February, they found a barren space with a half-deflated bouncy castle and a limited amount of food. Although the event failed to distribute chocolate or advertise accurately, organizers plastered AI-generated images of candy paradises across the warehouse.

“As image-creating AI makes more advancements, it’s going to become harder and harder to differentiate real pictures from fake ones. I used to make AI images to make funny pictures because of how obnoxious they looked but recently AI has been used in the entertainment industry because of how fast and easy it is to churn out whatever people want. In that failed Willy Wonka experience, they used AI to make scripts for the actors and images to lure people inside. If no restrictions are put in place soon AI will have grand consequences such as how easily people can create malicious pictures,” senior Emilio Medina said.

If lawmakers do not introduce bills that can properly regulate the distribution of fake images, then people need to learn how to distinguish real photos from AI-generated misinformation. As older generations struggle to keep up with technology, younger generations should caution others about the detrimental effects of artificial intelligence. People need to inform their peers about the characteristics of AI-generated images and report destructive photos.